Publications

* indicates equal contribution

Discover more on Google Scholar

2026

Reasoning as Gradient: Scaling MLE Agents Beyond Tree Search (MLE Setting of RD-Agent)

Findings of ACL 2026

📈 Gradient-based optimization scales better than tree search with stronger reasoning models

We introduce Gome, the MLE setting of RD-Agent, which maps diagnostic reasoning to gradient computation, success memory to momentum, and multi-trace execution to distributed optimization. Under a closed-world protocol, it reaches a 35.1% any-medal rate on MLE-Bench with a 12-hour single-V100 budget. Scaling experiments show that gradient-based optimization increasingly outperforms gradient-free tree search as model reasoning capabilities improve.

FT-Dojo: Towards Autonomous LLM Fine-Tuning with Language Agents (Fine-Tuning Setting of RD-Agent)

ICML 2026

🤖 Benchmarking autonomous end-to-end LLM fine-tuning

We introduce FT-Dojo, the fine-tuning setting of RD-Agent, with 13 tasks across 5 domains for autonomous LLM fine-tuning, together with FT-Agent, a purpose-built system that achieves the best performance on 10 of 13 tasks and iteratively improves strategies from evaluation history.

2025

R&D-Agent: An LLM-Agent Framework Towards Autonomous Data Science (Technical Report)

arXiv preprint 2025

🏅 Top-performing open-source framework on MLE-Bench

A top-performing open-source framework on MLE-Bench that organizes autonomous data science into two parts: Research, which proposes ideas, and Development, which converts them into executable workflows and solutions.

arXiv preprint 2025

🚀 Fully-automated and data-uncontaminated benchmark for LLM agents in finance

A comprehensive benchmark for evaluating autonomous trading agents in real-time financial markets across NASDAQ 100, SSE 50, and cryptocurrency markets, enabling fair comparison of AI trading strategies with zero human intervention.

NeurIPS 2025

🏆 Best Paper Award, ICLR 2025 Workshop on Advances in Financial AI (1/53)

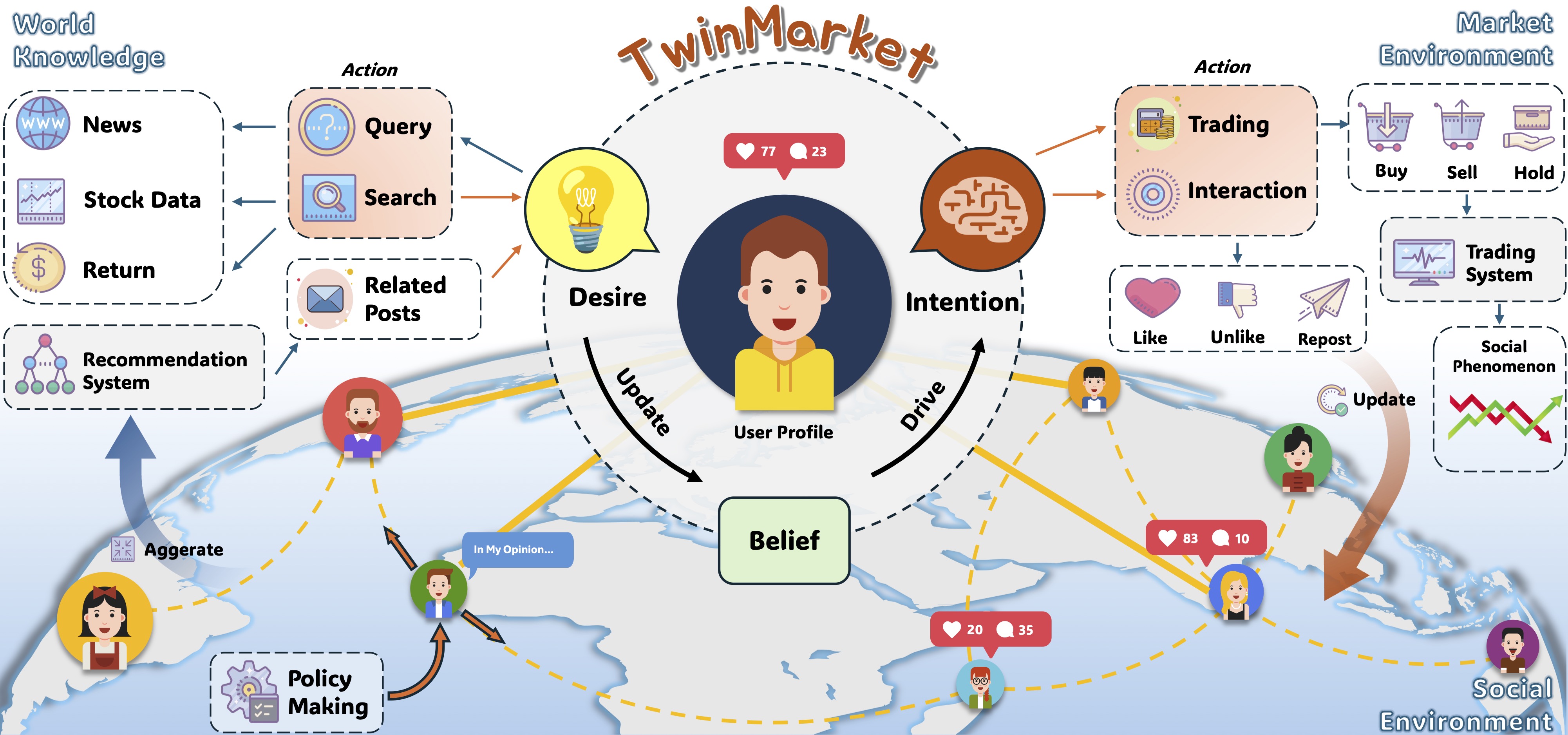

A multi-agent simulation platform for financial markets that integrates behavioral economics and social network dynamics, enabling large-scale market experiments with diverse agent behaviors.

Findings of NAACL 2025

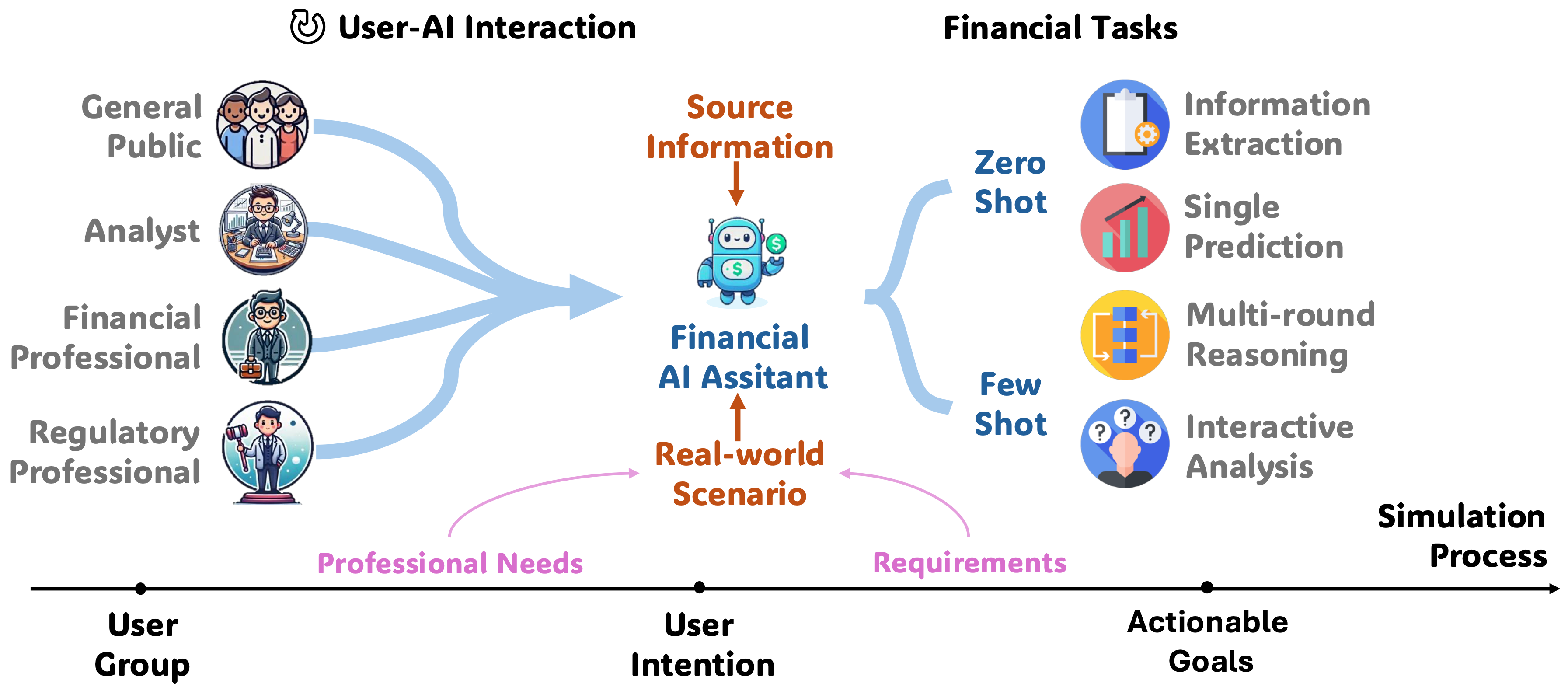

A comprehensive benchmark evaluating LLMs' financial expertise from a user-centric perspective, covering domain knowledge, application capabilities, and trustworthiness across 300+ real-world questions.

Separating Skill from Luck: LLM-Based Belief Extraction and Investment Ability Measurement of Financial Influencers

Chengdu Four-University Joint Finance Forum & The 7th Finance PhD Academic Forum, 2025

🏅 Outstanding Paper Award (2/20)

Analyzes 10M+ posts from 60,000+ financial influencers on Xueqiu, using LLMs to extract stock expectations and finite mixture models to separate skill from luck. Only 46.63% of influencers show positive investment ability, while following the top 10% yields daily returns of 22.4 bps.

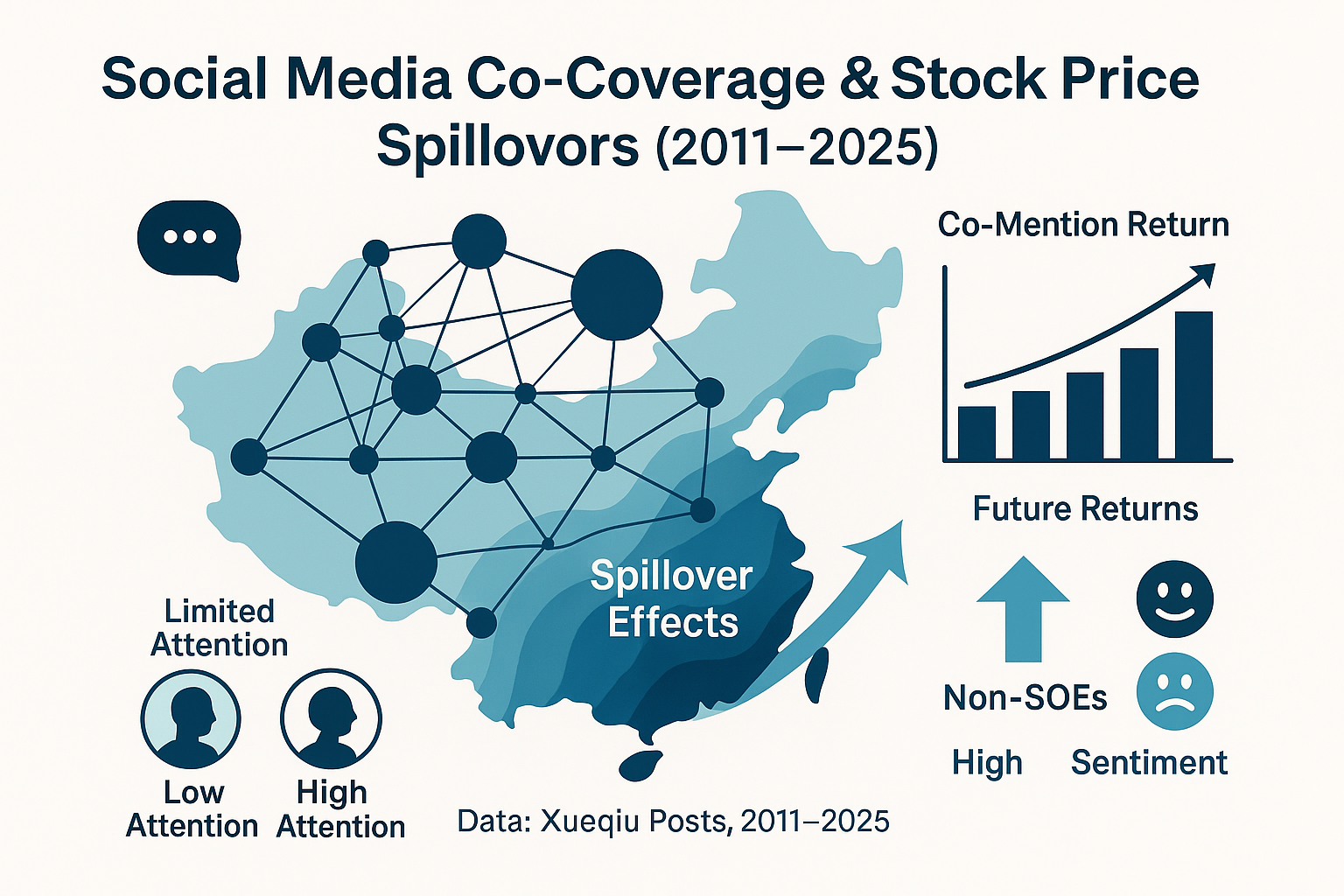

Shared Fortunes and Risks: Stock Price Spillover Effects of Corporate Ties — Evidence from the Chinese Social Media Platform "Xueqiu"

The 7th Conference of the Chinese Society of Optimization, Overall Planning, and Economic Mathematics, 2025

🎖️ Outstanding Paper Award (10/190)

Investigates stock price spillover effects through corporate network ties on social media, revealing how social connections influence market dynamics and investor behavior.

2024

arXiv preprint 2024

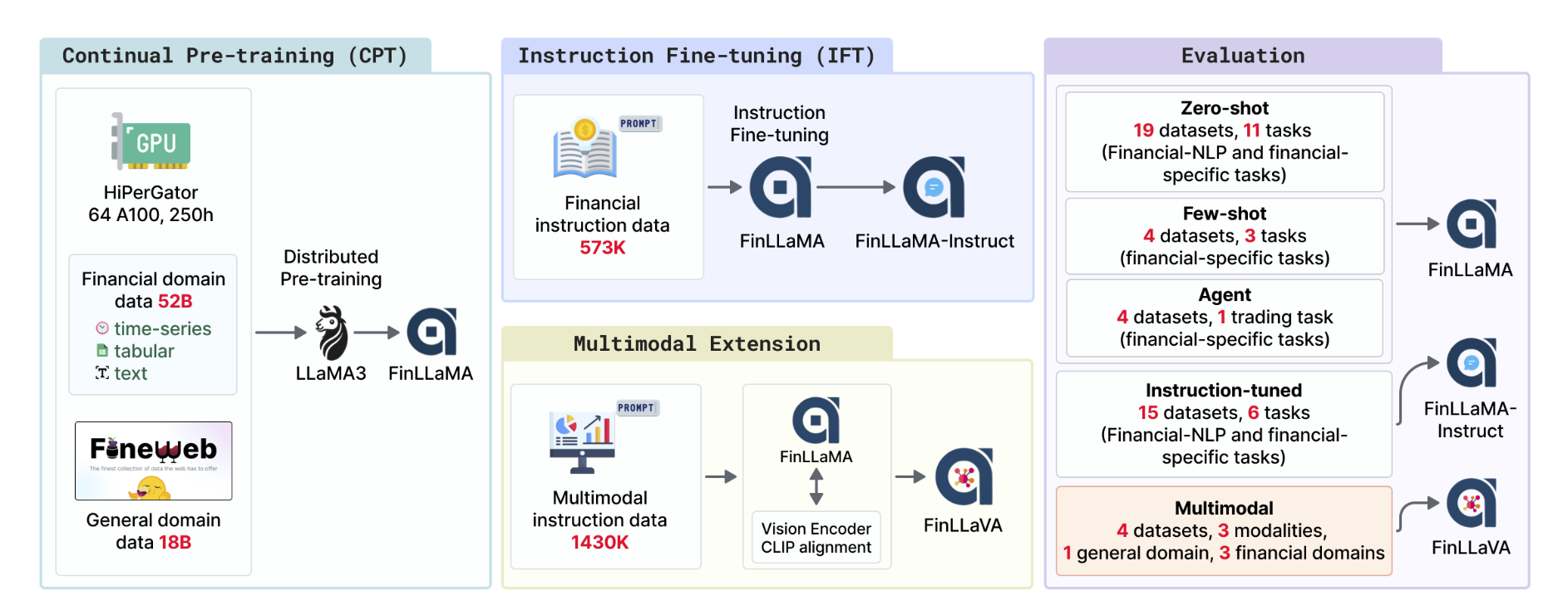

🎯 First open-source financial multimodal LLM: FinLLaVA-8B

A series of financial LLMs including FinLLaMA (pre-trained on 52B tokens), FinLLaMA-instruct (573K instructions), and FinLLaVA (the first open-source financial multimodal LLM) trained with 1.43M image-text instructions.

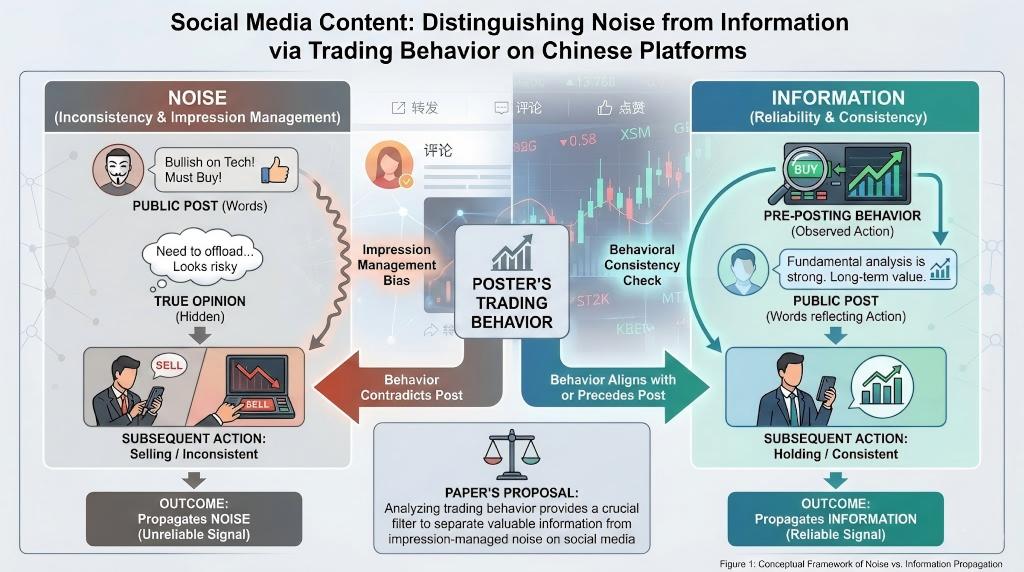

Do investors' actions speak louder than words?

The 21st Annual Conference on Financial Engineering and Risk Management, 2024

📊 Distinguishing noise from information through trading behavior

Examines whether posts on Chinese social media propagate noise or information, proposing that both coexist but can be distinguished by posters' trading behavior. Observing trading actions helps assess the reliability of expressed views.